When you start with Hive on Hadoop, the clear majority of samples and tutorials will have you work with text files. However, some time ago the Hive community clearly recognized the disadvantages of text files as a file format, in terms of both storage efficiency and performance.

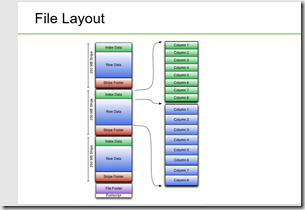

The first move toward better columnar storage was the introduction of the RCFile format. RCFile (Record Columnar File) is a data placement structure designed for MapReduce-based data warehouse systems. Hive added the RCFile format in version 0.6.0. RCFile stores table data in a flat file consisting of binary key/value pairs. It first partitions rows horizontally into row splits, and then it vertically partitions each row split in a columnar way. RCFile stores the metadata of a row split as the key part of a record, and all the data of a row split as the value part. The JavaDoc at http://hive.apache.org/javadocs/r1.0.1/api/org/apache/hadoop/hive/ql/io/RCFile.html documents the internals of the RCFile format. What is important to note is why it was introduced, that is, its advantages:

- As a row store, RCFile guarantees that data in the same row are located on the same node.

- As a column store, RCFile can exploit column-wise data compression and skip unnecessary column reads.
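To make the "row splits first, then columns" layout concrete, here is a toy in-memory model of it. This is a simplified sketch, not the real RCFile on-disk format or API. The helper names `to_row_splits`, `to_columnar` and `read_column` are hypothetical. Every value of a given row stays in the same split, which is the row-store property. A reader that needs one column touches only that column's chunk in each split, which is the column-store property.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]

def to_row_splits(rows: List[Row], split_size: int) -> List[List[Row]]:
    # Horizontal partitioning: consecutive rows go into the same row split.
    return [rows[i:i + split_size] for i in range(0, len(rows), split_size)]

def to_columnar(split: List[Row], columns: List[str]) -> Dict[str, List[Any]]:
    # Vertical partitioning inside one split: one value list per column.
    return {col: [row[col] for row in split] for col in columns}

def read_column(store: List[Dict[str, List[Any]]], column: str) -> List[Any]:
    # Only the requested column's chunk is read from each split.
    values: List[Any] = []
    for split in store:
        values.extend(split[column])
    return values

rows = [{"id": i, "carrier": "AA" if i % 2 else "DL", "delay": i * 3} for i in range(5)]
columns = ["id", "carrier", "delay"]
store = [to_columnar(s, columns) for s in to_row_splits(rows, split_size=2)]
print(len(store))                   # 3 row splits: 2 + 2 + 1 rows
print(read_column(store, "delay"))  # [0, 3, 6, 9, 12]
```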

As time passed, the explosion of data and the need for faster HiveQL queries created demand for even more optimized columnar storage formats, and so the ORC file format was introduced. The Optimized Row Columnar (ORC) file format provides a highly efficient way to store Hive data. It was designed to overcome the limitations of the other Hive file formats. Using ORC files improves performance when Hive is reading, writing, and processing data.

It has the following advantages over the RCFile format:

- a single file as the output of each task, which reduces the NameNode’s load

- light-weight indexes stored within the file, allowing readers to skip row groups that don't pass predicate filtering and to seek to a given row

- block-mode compression based on data type
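The following toy sketch shows the idea behind the light-weight index. With one min/max entry per group of rows, a reader can rule out whole row groups for a predicate and seek straight to the groups that may match. It makes two simplifying assumptions: a single integer column, and one `(min, max)` pair per `stride` rows, loosely mirroring `orc.row.index.stride`. The helper names are hypothetical. The real ORC index also stores stream positions, and this code does not model them.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class IndexEntry:
    first_row: int  # row number where this row group starts
    min_value: int
    max_value: int

def build_index(values: List[int], stride: int) -> List[IndexEntry]:
    # One entry per `stride` rows, holding that group's min and max.
    return [
        IndexEntry(start, min(values[start:start + stride]), max(values[start:start + stride]))
        for start in range(0, len(values), stride)
    ]

def groups_to_read(index: List[IndexEntry], lo: int, hi: int) -> List[int]:
    # Keep only groups whose [min, max] can overlap lo <= value <= hi;
    # all other groups are skipped without reading their rows.
    return [e.first_row for e in index if e.max_value >= lo and e.min_value <= hi]

def scan(values: List[int], stride: int, lo: int, hi: int) -> Tuple[List[int], int]:
    index = build_index(values, stride)
    hits: List[int] = []
    groups_read = 0
    for first_row in groups_to_read(index, lo, hi):
        groups_read += 1
        # Seek straight to the first row of a surviving group.
        for v in values[first_row:first_row + stride]:
            if lo <= v <= hi:
                hits.append(v)
    return hits, groups_read

values = list(range(100))  # sorted data gives tight min/max ranges
hits, groups_read = scan(values, stride=10, lo=42, hi=47)
print(hits, groups_read)   # [42, 43, 44, 45, 46, 47] 1
```

On sorted data, only 1 of the 10 row groups is read here. On randomly ordered data, most groups would overlap the predicate, which is why sorting and indexing tend to be combined.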

An ORC file contains groups of row data called stripes, along with auxiliary information in a file footer. At the end of the file a postscript holds compression parameters and the size of the compressed footer.

The default stripe size is 250 MB. Large stripe sizes enable large, efficient reads from HDFS.

The file footer contains a list of stripes in the file, the number of rows per stripe, and each column's data type. It also contains column-level aggregates: count, min, max, and sum.
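Here is a minimal sketch of the stripe/footer split, built on a few simplifying assumptions. It handles a single integer column and counts stripe size in rows rather than bytes. The classes are hypothetical and do not model ORC's real footer encoding or the postscript. The point is that the writer updates count, min, max and sum as it produces stripes, so these aggregates are available afterwards without scanning any stripe.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

@dataclass
class ColumnStats:
    count: int = 0
    minimum: Optional[int] = None
    maximum: Optional[int] = None
    total: int = 0

    def update(self, value: int) -> None:
        self.count += 1
        self.minimum = value if self.minimum is None else min(self.minimum, value)
        self.maximum = value if self.maximum is None else max(self.maximum, value)
        self.total += value

@dataclass
class Footer:
    rows_per_stripe: List[int] = field(default_factory=list)
    stats: ColumnStats = field(default_factory=ColumnStats)

def write_file(values: List[int], stripe_rows: int) -> Tuple[List[List[int]], Footer]:
    stripes: List[List[int]] = []
    footer = Footer()
    for start in range(0, len(values), stripe_rows):
        stripe = values[start:start + stripe_rows]
        stripes.append(stripe)
        footer.rows_per_stripe.append(len(stripe))
        for v in stripe:
            footer.stats.update(v)  # aggregates are maintained while writing
    return stripes, footer

stripes, footer = write_file([7, 3, 9, 1, 5], stripe_rows=2)
print(footer.rows_per_stripe)  # [2, 2, 1]
print(footer.stats)            # ColumnStats(count=5, minimum=1, maximum=9, total=25)
```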

What does it all mean for me?

For us as implementers, it means the following:

- Better read performance due to compression. Streams are compressed using a codec, which is specified as a table property for all streams in that table. To optimize memory use, compression is done incrementally as each block is produced. Compressed blocks can be jumped over without first having to be decompressed for scanning. Positions in the stream are represented by a block start location and an offset into the block.

- Column-level statistics for optimization, a feature that has long existed in pretty much all commercial RDBMS packages (Oracle, SQL Server, etc.). For each column, the writer records the count and, depending on the type, other useful fields. For most primitive types it records the minimum and maximum values, and for numeric types it additionally stores the sum. From Hive 1.1.0 onwards, the column statistics also record whether there are any null values within the row group by setting the hasNull flag.

- Light-weight indexing

- Larger blocks, 256 MB by default
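The compression point above can be sketched as follows. The stream is cut into fixed-size chunks, and each chunk is compressed independently. This sketch uses Python's `zlib`, matching ORC's default ZLIB codec in spirit only. A position is then the pair (start of the compressed chunk, offset inside the uncompressed chunk). The framing here is a simplified assumption, since ORC's real chunk headers differ. The 1,024-byte chunk size is chosen for the demo, whereas the documented default `orc.compress.size` is 262,144 bytes. Function names are hypothetical.

```python
import zlib
from typing import List, Tuple

def compress_stream(data: bytes, chunk_size: int) -> Tuple[bytes, List[int]]:
    # Compress each chunk independently and remember where each one starts.
    out = bytearray()
    starts: List[int] = []
    for i in range(0, len(data), chunk_size):
        starts.append(len(out))
        out += zlib.compress(data[i:i + chunk_size])
    return bytes(out), starts

def position_of(offset: int, chunk_size: int, starts: List[int]) -> Tuple[int, int]:
    # Map an uncompressed offset to (compressed chunk start, offset in chunk).
    return starts[offset // chunk_size], offset % chunk_size

def read_at(blob: bytes, starts: List[int], pos: Tuple[int, int], length: int) -> bytes:
    # Reads stay within one chunk in this sketch.
    chunk_start, offset = pos
    idx = starts.index(chunk_start)
    chunk_end = starts[idx + 1] if idx + 1 < len(starts) else len(blob)
    # Only this chunk is decompressed; earlier chunks are jumped over.
    chunk = zlib.decompress(blob[chunk_start:chunk_end])
    return chunk[offset:offset + length]

# 1,000 fixed-width "rows" of 9 bytes each = 9,000 bytes of stream data.
data = b"".join(b"row%05d;" % i for i in range(1000))
blob, starts = compress_stream(data, chunk_size=1024)
pos = position_of(500 * 9, 1024, starts)  # where row 500 starts
print(read_at(blob, starts, pos, 9))      # b'row00500;'
```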

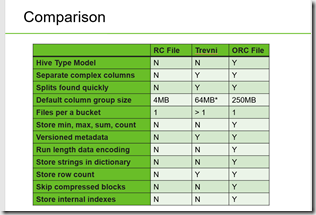

Here is a good Hive file format comparison from Hortonworks:

Using ORC: creating a table in ORC format

The simplest way to create an ORC-formatted Hive table is to add STORED AS ORC to the CREATE TABLE statement, like this:

```sql
CREATE TABLE my_table (
  column1 STRING,
  column2 STRING,
  column3 INT,
  column4 INT
)
STORED AS ORC;
```

The ORC file format can be used together with Hive partitioning, which I explained in my previous post. Here is an example that combines partitioning (and bucketing) with the ORC file format:

```sql
CREATE TABLE airanalytics (
  flightdate date, dayofweek int, depttime int, crsdepttime int,
  arrtime int, crsarrtime int, uniquecarrier varchar(10), flightno int,
  tailnum int, aet int, cet int, airtime int, arrdelay int, depdelay int,
  origin varchar(5), dest varchar(5), distance int, taxin int, taxout int,
  cancelled int, cancelcode int, diverted string, carrdelay string,
  weatherdelay string, securtydelay string, cadelay string, lateaircraft string
)
PARTITIONED BY (flight_year string)   -- one directory per flight_year value
CLUSTERED BY (uniquecarrier)          -- rows are hashed into buckets by carrier
SORTED BY (flightdate)                -- each bucket is sorted by flightdate
INTO 24 BUCKETS
STORED AS ORC
-- no compression, 64 MB stripes, one index entry every 25,000 rows
TBLPROPERTIES ("orc.compress"="NONE", "orc.stripe.size"="67108864", "orc.row.index.stride"="25000");
```
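The `CLUSTERED BY (uniquecarrier) ... INTO 24 BUCKETS` clause routes every row to a bucket by hashing the clustering column and taking the remainder modulo the bucket count. As a result, all rows for one carrier end up in the same bucket. The sketch below illustrates that idea under a loudly stated assumption: it uses a Java-style `h * 31 + byte` string hash. The exact hash Hive applies depends on the column type and the bucketing version, so real bucket numbers may differ. The function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

def java_style_hash(s: str) -> int:
    # Assumed hash: h = h * 31 + byte over UTF-8 bytes, kept to 32 bits.
    h = 0
    for b in s.encode("utf-8"):
        h = (h * 31 + b) & 0xFFFFFFFF
    return h

def bucket_for(value: str, num_buckets: int = 24) -> int:
    # Clear the sign bit, then take the remainder: always in [0, num_buckets).
    return (java_style_hash(value) & 0x7FFFFFFF) % num_buckets

def assign(carriers: List[str], num_buckets: int = 24) -> Dict[int, List[str]]:
    buckets: Dict[int, List[str]] = defaultdict(list)
    for carrier in carriers:
        buckets[bucket_for(carrier, num_buckets)].append(carrier)
    return dict(buckets)

print(assign(["AA", "DL", "AA", "UA", "DL"]))
# {16: ['AA', 'AA'], 0: ['DL', 'DL'], 12: ['UA']}
```

`SORTED BY (flightdate)` then orders the rows inside each bucket. This keeps the per-row-group min/max ranges on `flightdate` narrow, so the light-weight index can skip more row groups for date predicates.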

The table-level properties used above, with their defaults as listed in the Hive documentation:

| Key | Default | Notes |
|---|---|---|
| `orc.compress` | ZLIB | high-level compression (one of NONE, ZLIB, SNAPPY) |
| `orc.compress.size` | 262,144 | number of bytes in each compression chunk |
| `orc.stripe.size` | 268,435,456 | number of bytes in each stripe |
| `orc.row.index.stride` | 10,000 | number of rows between index entries (must be >= 1000) |
| `orc.create.index` | true | whether to create row indexes |
| `orc.bloom.filter.columns` | "" | comma-separated list of column names for which bloom filters should be created |
| `orc.bloom.filter.fpp` | 0.05 | false positive probability for the bloom filter (must be > 0.0 and < 1.0) |
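To see what `orc.bloom.filter.fpp` trades off, the sketch below sizes a bloom filter with the standard formulas m = -n·ln(p)/(ln 2)² bits and k = round((m/n)·ln 2) hash functions. It then measures the false positive rate empirically. It rests on three assumptions. First, n = 10,000 entries, the default `orc.row.index.stride`, on the assumption that the filter is kept per row group. Second, the filter is a generic double-hashing one built on SHA-256, not ORC's own hashing. Third, the names are hypothetical.

```python
import hashlib
import math
from typing import Iterator, Tuple

def bloom_size(n: int, fpp: float) -> Tuple[int, int]:
    # Standard sizing: m bits and k hash functions for n entries at rate fpp.
    m = math.ceil(-n * math.log(fpp) / (math.log(2) ** 2))
    k = max(1, round(m / n * math.log(2)))
    return m, k

class BloomFilter:
    def __init__(self, n: int, fpp: float) -> None:
        self.m, self.k = bloom_size(n, fpp)
        self.bits = bytearray((self.m + 7) // 8)

    def _positions(self, item: str) -> Iterator[int]:
        # Double hashing: k bit positions derived from two 64-bit hashes.
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "little")
        h2 = int.from_bytes(digest[8:16], "little") | 1  # force h2 to be odd
        for i in range(self.k):
            yield (h1 + i * h2) % self.m

    def add(self, item: str) -> None:
        for p in self._positions(item):
            self.bits[p // 8] |= 1 << (p % 8)

    def might_contain(self, item: str) -> bool:
        # False means "definitely not present", so the row group can be skipped.
        return all(self.bits[p // 8] & (1 << (p % 8)) for p in self._positions(item))

bf = BloomFilter(n=10_000, fpp=0.05)
for i in range(10_000):
    bf.add(f"N{i:05d}")
false_pos = sum(bf.might_contain(f"X{i:05d}") for i in range(10_000)) / 10_000
print(bf.m, bf.k, round(false_pos, 3))  # 62353 bits, 4 hashes, rate near 0.05
```

With these formulas, the default fpp of 0.05 costs about 6.2 bits per entry and 4 hash functions. Lowering it to 0.01 needs about 9.6 bits per entry and 7 hash functions. The setting is therefore a direct trade between index size and how many row groups are read needlessly.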

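If you have an existing Hive table, you can switch it to ORC with:

- `ALTER TABLE … [PARTITION partition_spec] SET FILEFORMAT ORC;` This changes only the table (or partition) metadata. Existing data files are not converted, so data already stored in another format has to be rewritten into ORC, for example by copying it into a new ORC table with INSERT ... SELECT.

- `SET hive.default.fileformat=Orc;` This makes ORC the default format for tables created afterwards without an explicit STORED AS clause.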

For more information, see:

- https://en.wikipedia.org/wiki/RCFile

- https://cwiki.apache.org/confluence/display/Hive/LanguageManual+ORC

- https://cwiki.apache.org/confluence/display/Hive/FileFormats

- https://orc.apache.org/docs/hive-config.html

Hope this helps.