If you have worked on Linux and are interested in NoSQL, you have probably already heard of Redis. Redis is a data structure server: an open-source, networked, in-memory key-value store with optional durability. Its development has been sponsored by Pivotal Software since May 2013; before that, it was sponsored by VMware. According to the monthly ranking by DB-Engines.com, Redis is the most popular key-value store. The name Redis stands for REmote DIctionary Server.

I have heard people refer to Redis as a NoSQL data store, since it can save your data to disk. I have heard people refer to it as a distributed cache, since it provides an in-memory key-value data store. And some categorize it as a distributed queue, since it supports storing data in hash and list types and provides enqueue, dequeue and pub/sub functionality. So, as you can see, we are talking about a very powerful product here.

Unfortunately, while Redis has been available and fully supported on Linux for some time, it doesn’t officially support Windows. Fortunately, Microsoft Open Technologies (http://msopentech.com/) created a port of Redis that runs on Windows and can be downloaded from GitHub here: https://github.com/MSOpenTech/Redis. I installed this port on my laptop a while ago, but only now found some time to explore it. It looks like the Redis maintainers are not interested in merging Windows-specific patches into the main branch (see http://oldblog.antirez.com/post/redis-win32-msft-patch.html), so for now and for the foreseeable future the MSOpenTech port will be on its own.

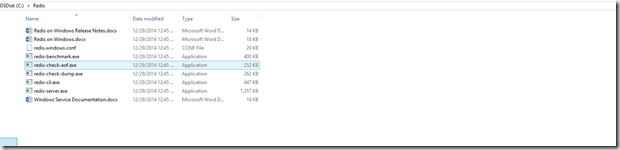

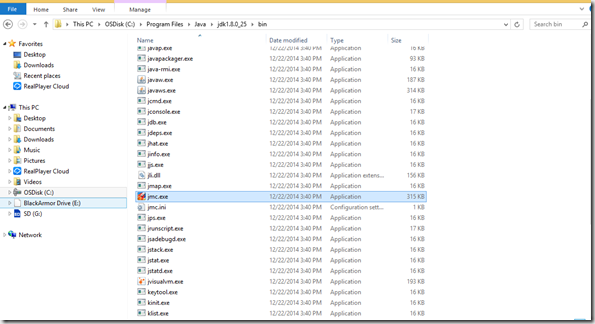

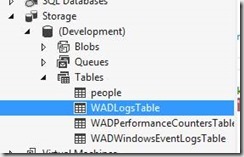

After you install and build Redis on Windows using Visual Studio, you should see something like this in your Redis folder.

This should create the following executables in the msvs\$(Target)\$(Configuration) folder:

- redis-server.exe

- redis-benchmark.exe

- redis-cli.exe

- redis-check-dump.exe

- redis-check-aof.exe

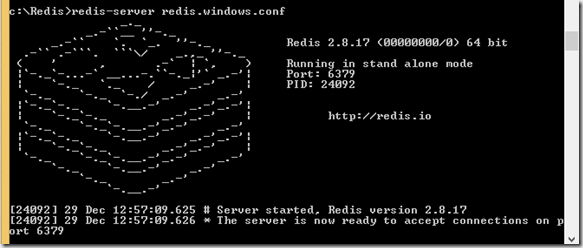

The simplest way to start a Redis server is to open a command window, go to this folder, and execute redis-server.exe. You will then see that Redis is running.

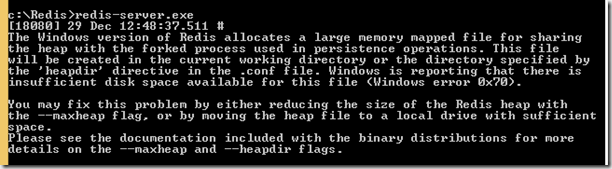

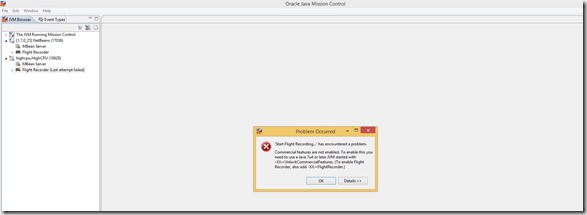

I actually ran into an issue during this step. As I started Redis I immediately saw an error like this:

So I had to find the configuration file, redis.windows.conf. There I uncommented the maxmemory parameter and set it to 256 MB. Why? The comments in that file explain:

The maxheap flag controls the maximum size of this memory mapped file, as well as the total usable space for the Redis heap. Running Redis without either maxheap or maxmemory will result in a memory mapped file being created that is equal to the size of physical memory. During fork() operations the total page file commit will max out at around:

(size of physical memory) + (2 * size of maxheap)

For instance, on a machine with 8GB of physical RAM, the max page file commit with the default maxheap size will be (8)+(2 * 8) GB, or 24GB. The default page file sizing of Windows will allow for this without having to reconfigure the system. Larger heap sizes are possible, but the maximum page file size will have to be increased accordingly.

The Redis heap must be larger than the value specified by the maxmemory flag, as the heap allocator has its own memory requirements and fragmentation of the heap is inevitable. If only the maxmemory flag is specified, maxheap will be set at 1.5 * maxmemory. If the maxheap flag is specified along with maxmemory, the maxheap flag will be automatically increased if it is smaller than 1.5 * maxmemory.

So here comes the curse of the modern laptop with a small SSD. I only have about 15 GB free on my disk, but 32 GB of RAM. Obviously the default behavior of creating a memory mapped file the size of my RAM will not work, so I cut maxmemory accordingly.
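To make the sizing rules quoted above concrete, here is a minimal sketch that simply restates the two formulas in C#. The class and method names (RedisHeapSizing, EffectiveMaxHeapMb, PeakCommitMb) are made up for illustration and are not part of Redis or any library. The laptop case assumes only maxmemory is set, so maxheap falls back to 1.5 * maxmemory.

```csharp
using System;

public static class RedisHeapSizing
{
    // Effective maxheap in MB: at least 1.5 * maxmemory, per the quoted config comments.
    // Pass null for maxHeapMb when only maxmemory is configured.
    public static long EffectiveMaxHeapMb(long maxMemoryMb, long? maxHeapMb)
    {
        long floor = maxMemoryMb * 3 / 2; // 1.5 * maxmemory
        return maxHeapMb.HasValue ? Math.Max(maxHeapMb.Value, floor) : floor;
    }

    // Approximate peak page file commit during fork(): physical + 2 * maxheap.
    public static long PeakCommitMb(long physicalMb, long maxHeapMb)
    {
        return physicalMb + 2 * maxHeapMb;
    }

    public static void Main()
    {
        // Default case from the quoted text: 8 GB RAM, maxheap equal to physical memory.
        Console.WriteLine(PeakCommitMb(8192, 8192)); // 24576 MB, i.e. 24 GB

        // Hypothetical laptop case: 32 GB RAM, maxmemory 256 MB, maxheap left unset.
        long heap = EffectiveMaxHeapMb(256, null);    // 384 MB
        Console.WriteLine(PeakCommitMb(32768, heap)); // 33536 MB
    }
}
```

Under these assumptions the commit beyond physical RAM drops from 2 * 32 GB with the defaults to 2 * 384 MB, which is what makes the 15 GB of free disk workable.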

To do anything useful in console mode we have to start the Redis console, redis-cli.exe. Redis has the same basic concept of a database that you are already familiar with: a database contains a set of data. The typical use case for a database is to group all of an application’s data together and keep it separate from another application’s. In Redis, databases are simply identified by a number, with the default database being number 0. If you want to change to a different database, you can do so via the select command.

    c:\Redis>redis-cli.exe
    127.0.0.1:6379> select 0
    OK
    127.0.0.1:6379>

While Redis is more than just a key-value store, at its core every one of Redis’ five data structures has at least a key and a value. It’s imperative that we understand keys and values before moving on to anything else. I will not go into detail on the concept of a key-value store here, but let’s use redis-cli to add a key-value pair and retrieve it via the console. To add an item I will use the set command:

    c:\Redis>redis-cli.exe
    127.0.0.1:6379> select 0
    OK
    127.0.0.1:6379> set users:gennadyk '("name";"GennadyK","counry","US")'
    OK

So I added an item under the key users:gennadyk (the colon is only a naming convention that groups the key under users). Next I will use get to retrieve my value:

    127.0.0.1:6379> get users:gennadyk
    "(\"name\";\"GennadyK\",\"counry\",\"US\")"
    127.0.0.1:6379>

Next let’s see all of the keys present:

    127.0.0.1:6379> keys *
    1) "users:gennadyk"
    127.0.0.1:6379>

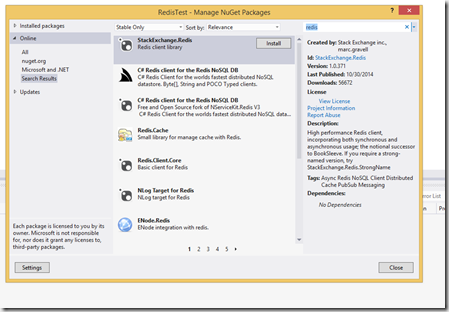

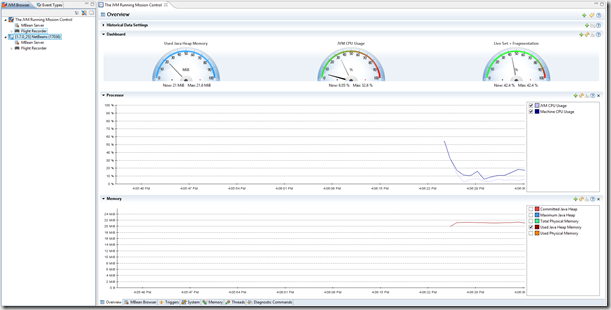

Now that the basics are done, let’s create a simple C# application to work with Redis. I fired up Visual Studio and started a small Windows console application project named, unsurprisingly, RedisTest. Next I go to Manage NuGet Packages and pick a client; in my case I pick the StackExchange.Redis client library.

Just hit Install and you are done. The code below is pretty simple, but it illustrates setting string key/value pairs in Redis and retrieving them:

    using StackExchange.Redis;

    namespace RedisTest
    {
        class Program
        {
            static void Main(string[] args)
            {
                // Connect once; the multiplexer is meant to be shared and reused.
                ConnectionMultiplexer redis = ConnectionMultiplexer.Connect("localhost");
                IDatabase db = redis.GetDatabase(0);
                int counter;
                string value;
                string key;

                // Create 4 users (counter 0..3) and put these into Redis
                for (counter = 0; counter < 4; counter++)
                {
                    value = "user" + counter.ToString();
                    key = "5676" + counter.ToString();
                    db.StringSet(key, value);
                }

                // Retrieve keys/values from Redis
                for (counter = 0; counter < 4; counter++)
                {
                    key = "5676" + counter.ToString();
                    value = db.StringGet(key);
                    System.Console.Out.WriteLine(key + "," + value);
                }

                // Keep the console window open until Enter is pressed
                System.Console.ReadLine();
            }
        }
    }

And here is the output:

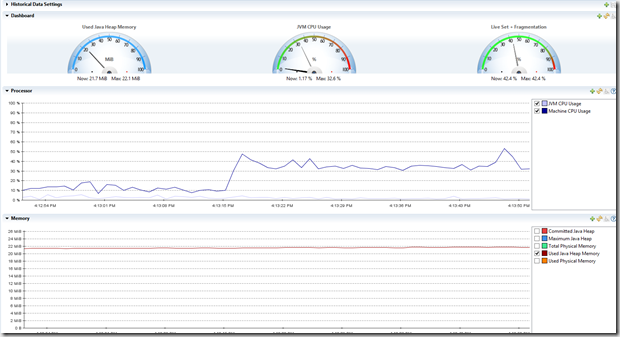

Looking at the code, the central object in StackExchange.Redis is the ConnectionMultiplexer class in the StackExchange.Redis namespace; this is the object that hides away the details of multiple servers. Because the ConnectionMultiplexer does a lot, it is designed to be shared and reused between callers. You should not create a ConnectionMultiplexer per operation. The situation is very similar to DataCacheFactory in the Microsoft Windows AppFabric Cache client: cache and reuse that ConnectionMultiplexer.
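One common way to share a single multiplexer is to wrap it in a Lazy<T> held in a static field. The sketch below is only an illustration of that idea, not the one required pattern: the RedisConnection class, its Instance property and the "greeting" key are names I made up, and it assumes a Redis server listening on localhost with the StackExchange.Redis package installed.

```csharp
using System;
using StackExchange.Redis;

// Hypothetical helper: holds one ConnectionMultiplexer for the whole process.
public static class RedisConnection
{
    // Lazy<T> creates the multiplexer on first use and is thread-safe by default,
    // so every caller ends up sharing the same instance.
    private static readonly Lazy<ConnectionMultiplexer> LazyConnection =
        new Lazy<ConnectionMultiplexer>(() => ConnectionMultiplexer.Connect("localhost"));

    public static ConnectionMultiplexer Instance
    {
        get { return LazyConnection.Value; }
    }
}

public static class Program
{
    public static void Main()
    {
        // Ask the shared multiplexer for a database handle whenever one is needed.
        IDatabase db = RedisConnection.Instance.GetDatabase();

        db.StringSet("greeting", "hello");
        string greeting = db.StringGet("greeting");
        Console.WriteLine(greeting);
    }
}
```

Callers then use RedisConnection.Instance everywhere instead of calling ConnectionMultiplexer.Connect themselves.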

A normal production scenario might involve a master/slave distributed data store setup; for this usage, simply specify all the desired nodes that make up that logical Redis tier (it will automatically identify the master):

    ConnectionMultiplexer redis = ConnectionMultiplexer.Connect("myserver1:6379,server2:6379");

The rest is even easier. Next I connect to the database (in my case the default one) via the GetDatabase call. After that I set four key/value pairs, keeping the keys unique by appending the loop counter, and then retrieve the values in a second loop by key.

Checking through redis-cli on my server I can see these values now:

    127.0.0.1:6379> keys *
    1) "56760"
    2) "56761"
    3) "users:gennadyk"
    4) "56763"
    5) "56762"
    127.0.0.1:6379>

I also learned some other interesting things, especially around configuration. I already mentioned the maxheap and maxmemory parameters; here are a few more:

| Parameter | Explanation | Default value |
| --- | --- | --- |
| Port | Listening port | 6379 |
| Bind | Bind host IP | 127.0.0.1 |
| Timeout | Connection timeout | 300 sec |
| loglevel | Logging level; there are four values: debug, verbose, notice, warning | verbose |
| logfile | Log mode | stdout |
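For reference, this is roughly how the parameters above would look in redis.windows.conf. The values simply mirror the table and the maxmemory setting I chose earlier; treat them as an illustration rather than recommended settings, since defaults can differ between Redis versions and ports.

```
# redis.windows.conf (fragment, values taken from the table above)
port 6379
bind 127.0.0.1
timeout 300
loglevel verbose
logfile stdout

# The 256 MB limit chosen earlier in this post
maxmemory 256mb
```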

Hope this helps. For more, see http://www.databaseskill.com/645056/, http://stevenmaglio.blogspot.com/2014/10/quick-redis-with-powershell.html, https://github.com/StackExchange/StackExchange.Redis